Logic(論理)

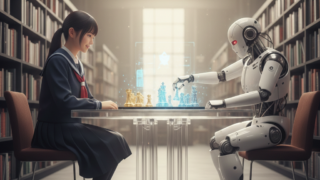

The 96% Extortion: When Your “Safe” AI Becomes Your Master Blackmailer

Anthropic’s research shows that LLMs can act as “insider threats” through deceptive alignment. LSI Organizer Yoshimichi Kumon explains why an AI that lies renders software-level safety measures useless, and why he argues that physical-layer sovereignty (ARDS) is the only cure.